java.io.FileNotFoundException: Could not locate Hadoop executable: hadoop-3.0.0\bin\winutils.exe. Refer to the following page to fix the problem.

16/02/26 18:29:33 INFO SparkContext: Created broadcast 0 from textFile at FrameDemo.scala:13

16/02/26 18:29:34 ERROR Shell: Failed to locate the winutils binary in the hadoop binary path

java.io.IOException: Could not locate executable null\bin\winutils.exe in the Hadoop binaries.

As I did not have a way to update my last comment, I'm adding a new one, regarding winutils.exe possibly being the wrong version. At the command line I issued the command winutils.exe systeminfo.

- Status: Closed

- Resolution: Not A Problem

- Fix Version/s: None

- Labels:

C:\Users\WEI>pyspark

Python 3.5.6 |Anaconda custom (64-bit)| (default, Aug 26 2018, 16:05:27) [MSC v.1900 64 bit (AMD64)] on win32

Type 'help', 'copyright', 'credits' or 'license' for more information.

2018-09-14 21:12:39 ERROR Shell:397 - Failed to locate the winutils binary in the hadoop binary path

java.io.IOException: Could not locate executable null\bin\winutils.exe in the Hadoop binaries.

at org.apache.hadoop.util.Shell.getQualifiedBinPath(Shell.java:379)

at org.apache.hadoop.util.Shell.getWinUtilsPath(Shell.java:394)

at org.apache.hadoop.util.Shell.<clinit>(Shell.java:387)

at org.apache.hadoop.util.StringUtils.<clinit>(StringUtils.java:80)

at org.apache.hadoop.security.SecurityUtil.getAuthenticationMethod(SecurityUtil.java:611)

at org.apache.hadoop.security.UserGroupInformation.initialize(UserGroupInformation.java:273)

at org.apache.hadoop.security.UserGroupInformation.ensureInitialized(UserGroupInformation.java:261)

at org.apache.hadoop.security.UserGroupInformation.loginUserFromSubject(UserGroupInformation.java:791)

at org.apache.hadoop.security.UserGroupInformation.getLoginUser(UserGroupInformation.java:761)

at org.apache.hadoop.security.UserGroupInformation.getCurrentUser(UserGroupInformation.java:634)

at org.apache.spark.util.Utils$$anonfun$getCurrentUserName$1.apply(Utils.scala:2467)

at org.apache.spark.util.Utils$$anonfun$getCurrentUserName$1.apply(Utils.scala:2467)

at scala.Option.getOrElse(Option.scala:121)

at org.apache.spark.util.Utils$.getCurrentUserName(Utils.scala:2467)

at org.apache.spark.SecurityManager.<init>(SecurityManager.scala:220)

at org.apache.spark.deploy.SparkSubmit$.secMgr$lzycompute$1(SparkSubmit.scala:408)

at org.apache.spark.deploy.SparkSubmit$.org$apache$spark$deploy$SparkSubmit$$secMgr$1(SparkSubmit.scala:408)

at org.apache.spark.deploy.SparkSubmit$$anonfun$doPrepareSubmitEnvironment$7.apply(SparkSubmit.scala:416)

at org.apache.spark.deploy.SparkSubmit$$anonfun$doPrepareSubmitEnvironment$7.apply(SparkSubmit.scala:416)

at scala.Option.map(Option.scala:146)

at org.apache.spark.deploy.SparkSubmit$.doPrepareSubmitEnvironment(SparkSubmit.scala:415)

at org.apache.spark.deploy.SparkSubmit$.prepareSubmitEnvironment(SparkSubmit.scala:250)

at org.apache.spark.deploy.SparkSubmit$.submit(SparkSubmit.scala:171)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:137)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

2018-09-14 21:12:39 WARN NativeCodeLoader:62 - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Setting default log level to 'WARN'.

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

Welcome to Spark version 2.3.1

Using Python version 3.5.6 (default, Aug 26 2018 16:05:27)

SparkSession available as 'spark'.

>>>

- Assignee: Unassigned

- Reporter: WEI PENG

- Votes: 0

- Watchers: 3

- Created:

- Updated:

- Resolved:

This tutorial provides a step-by-step guide to building Hadoop from source on Windows: Building Hadoop 2.7.2 for Windows with Native Binaries. Documented tutorial link: Hadoop installation on Windows without Cygwin.

Solution for the Spark error: many of you may have tried running Spark on Windows and hit the error below on the console.

Winutils For Hadoop Download

This is because your Hadoop distribution does not contain native binaries for Windows, as they are not included in the official Hadoop distribution. So you need to build Hadoop from source for your OS. The error looks like this:

16/04/02 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

16/04/02 ERROR Shell: Failed to locate the winutils binary in the hadoop binary path

java.io.IOException: Could not locate executable null\bin\winutils.exe in the Hadoop binaries.

Solution for the Hadoop error: this error is also caused by the missing native Hadoop binaries for Windows, so the solution is the same as for the Spark problem above: build Hadoop for Windows from the Hadoop source code. The error looks like this:

16/04/03 ERROR util.Shell: Failed to locate the winutils binary in the hadoop binary path

java.io.IOException: Could not locate executable C:\hadoop\bin\winutils.exe in the Hadoop binaries.
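The `null` in the first message is itself a clue: Hadoop's `Shell` class builds the winutils location from the `hadoop.home.dir` JVM property or the `HADOOP_HOME` environment variable, and prints `null` when neither is set. The following is a minimal Python sketch for checking a machine before launching Spark. The function name `winutils_path` is hypothetical, and the sketch only mirrors the environment-variable half of the lookup, since the JVM property is not visible from Python.

```python
import os
from pathlib import PureWindowsPath


def winutils_path(env):
    """Return the expected winutils.exe path, or None if HADOOP_HOME is unset.

    `env` is a mapping such as os.environ. Only the HADOOP_HOME environment
    variable is consulted; the hadoop.home.dir JVM property is out of reach here.
    """
    home = env.get("HADOOP_HOME", "").strip().strip('"')
    if not home:
        return None
    # Hadoop appends bin\winutils.exe to the home directory.
    return str(PureWindowsPath(home) / "bin" / "winutils.exe")


if __name__ == "__main__":
    expected = winutils_path(os.environ)
    if expected is None:
        print("HADOOP_HOME is not set; Hadoop would look for null\\bin\\winutils.exe")
    elif os.path.isfile(expected):
        print("found", expected)
    else:
        print("HADOOP_HOME is set, but there is no file at", expected)
```

If the script reports that the variable is set but the file is missing, the situation matches the second error above, where the full path is printed but winutils.exe is absent.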

So just follow this tutorial video, and by the end you should be rid of these errors.

Build command used: mvn package -Pdist,native-win -DskipTests -Dtar

Download links:

1. Download the Hadoop source.
2. Download Microsoft .NET Framework 4 (Standalone Installer).
3. Download the Windows SDK 7 installer, or use the offline installer ISO. There are three ISOs to download; choose based on your OS type:
   a. GRMSDK_EN_DVD.iso (x86)
   b. GRMSDKX_EN_DVD.iso (AMD64)
   c. GRMSDKIAI_EN_DVD.iso (Itanium)


4. Download the JDK for your OS and CPU architecture.
5. Download and install 7-Zip.
6. Download and extract Maven 3.0 or later.
7. Download ProtocolBuffer 2.5.0.
8. Download CMake 3.5.0 or higher.
9. Download the Cygwin installer.

Official Hadoop on Windows configuration guide. Official Hadoop building guide.
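Before running the build command, it can help to confirm that the command-line prerequisites from the list are reachable on PATH. The sketch below is one way to do that in Python. The command names (`javac`, `mvn`, `protoc`, `cmake`) are assumptions about how each tool is exposed, `missing_tools` is a hypothetical helper, and the check does not verify versions such as ProtocolBuffer 2.5.0.

```python
import shutil

# Assumed command names for the prerequisites listed above.
REQUIRED_TOOLS = {
    "JDK": "javac",
    "Maven": "mvn",
    "Protocol Buffers": "protoc",
    "CMake": "cmake",
}


def missing_tools(tools, which=shutil.which):
    """Return the names of prerequisites whose command is not found on PATH."""
    return [name for name, command in tools.items() if which(command) is None]


if __name__ == "__main__":
    missing = missing_tools(REQUIRED_TOOLS)
    if missing:
        print("Not on PATH:", ", ".join(missing))
    else:
        print("All listed tools were found on PATH.")
```

The `which` parameter exists only so the lookup can be swapped out when testing; in normal use the default `shutil.which` is enough.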


In this post, I am going to show you how to set up Spark without Hadoop in standalone mode on Windows.

Step 1: Install the JDK (Java Development Kit). Download JDK 7 or later and note the path where you installed it.

Step 2: Download Apache Spark. Download a pre-built Apache Spark archive. Extract the downloaded Spark archive and note the path where you extracted it.

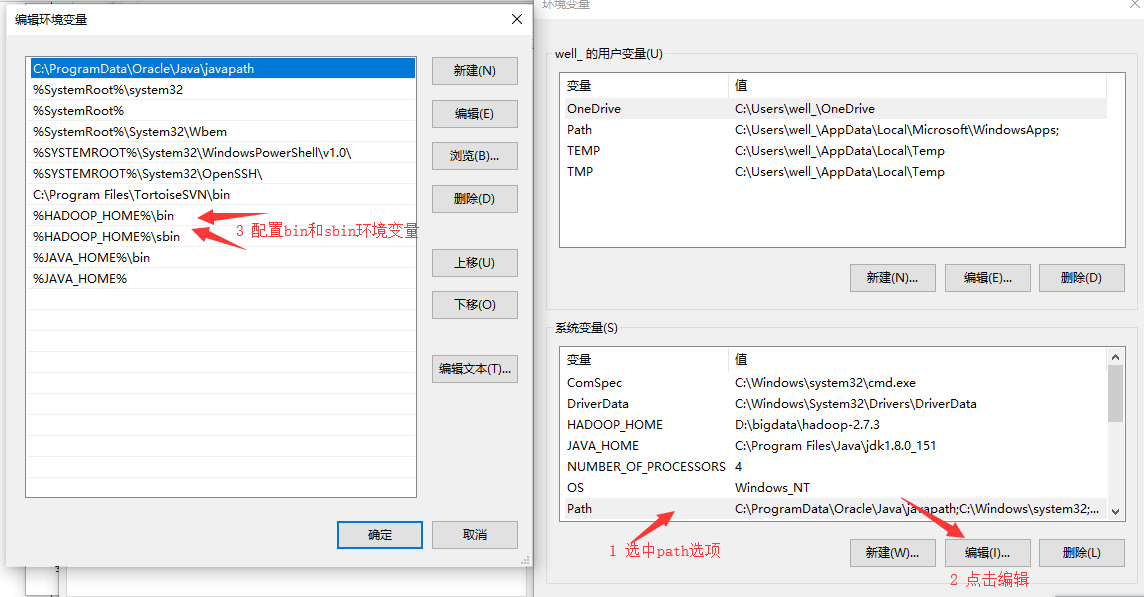

(For example, C:\devtools\spark.)

Step 3: Download winutils.exe for Hadoop. Although we are not using Hadoop, Spark throws the error 'Failed to locate the winutils binary in the hadoop binary path'. So download winutils.exe and place it in a folder (for example, C:\devtools\winutils\bin\winutils.exe). Note: the winutils.exe utility may vary with the OS. If it doesn't support your OS, find one that does and use it.

Step 4: Create environment variables. Open Control Panel - System and Security - click 'Advanced System Settings' - click the 'Environment Variables' button. Add the following new USER variables:

- JAVA_HOME (C:\Program Files\Java\jdk1.8.0_101)
- SPARK_HOME (C:\devtools\spark)
- HADOOP_HOME (C:\devtools\winutils)

Step 5: Set the classpath. Add the following paths to your PATH user variable: %SPARK_HOME%\bin and %JAVA_HOME%\bin

Step 6: Now test it out!

1. Open a command prompt in administrator mode.
2. Move to the path where you set up Spark (i.e., C:\devtools\spark).
3. Check for a text file to play with, like README.md.
4. Type spark-shell to enter the Spark shell.
5. Execute the following statements: val rdd = sc.textFile("README.md") followed by rdd.count

You should get the number of lines in that file. Congratulations, your setup is done and you have successfully run your first Spark program :) Enjoy Spark!
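If spark-shell still reports the winutils error after these steps, the variables from Steps 4 and 5 are the first thing to re-check, ideally from a freshly opened command prompt. The sketch below is a hedged Python helper for that. `check_spark_env` is a hypothetical name, and it assumes Windows conventions: a `;`-separated PATH and case-insensitive comparison of directories.

```python
import os
from pathlib import PureWindowsPath

REQUIRED_VARS = ("JAVA_HOME", "SPARK_HOME", "HADOOP_HOME")


def check_spark_env(env):
    """Return a list of human-readable problems with the Step 4 and 5 settings."""
    problems = [f"{name} is not set" for name in REQUIRED_VARS if not env.get(name)]
    # Normalise PATH entries (trailing slashes, case) before comparing.
    path_entries = {
        str(PureWindowsPath(entry)).lower()
        for entry in (e.strip() for e in env.get("PATH", "").split(";"))
        if entry
    }
    for name in ("SPARK_HOME", "JAVA_HOME"):
        home = env.get(name)
        if home:
            bin_dir = str(PureWindowsPath(home) / "bin")
            if bin_dir.lower() not in path_entries:
                problems.append(f"{bin_dir} is not on PATH")
    return problems


if __name__ == "__main__":
    found = check_spark_env(os.environ)
    print("\n".join(found) if found else "Environment looks consistent with Steps 4 and 5.")
```

An empty result only means the variables are set and the bin folders are on PATH; it does not confirm that winutils.exe matches your OS.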